Quite frequently in science and engineering, our daily progress gets blocked because of dependencies on other items: whether we need information from co-workers, access to laboratory equipment, or sometimes even lab supplies. We all know that our efficiency, and associated work satisfaction, is boosted when we can make steady progress towards our goals, without having to wait on others. We don’t want to be hindered.

After working for many years in life science and diagnostic applications, I have come to realize that too many blockers keep us from getting work done, and that we should try hard to identify and remove them to become more efficient.

In this post, I will try to show how the use of modern computing tools can help resolve dependencies, specifically those related to lab automation equipment, and how these tools will help more people become more productive.

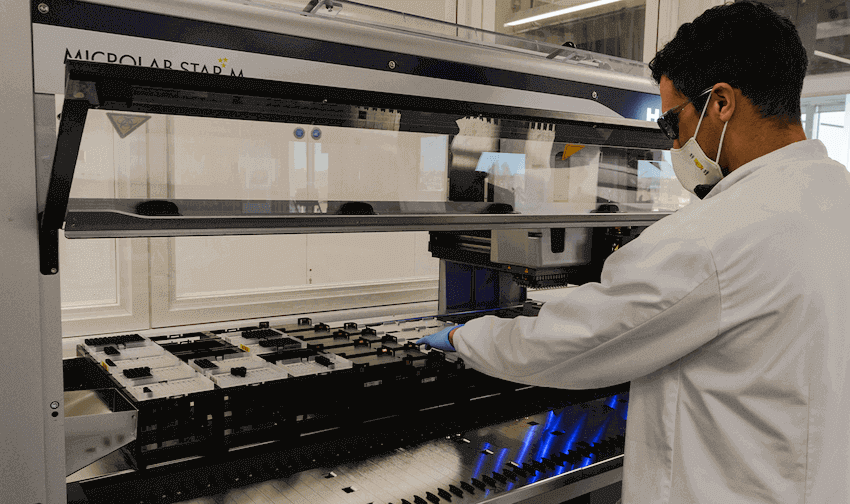

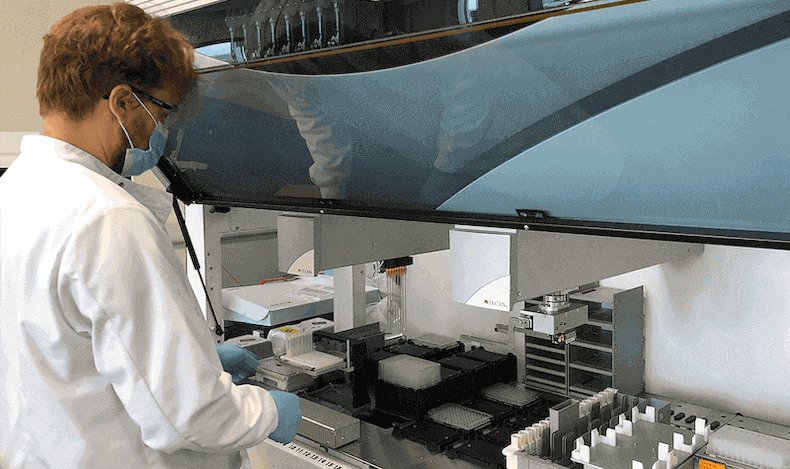

Interaction with automated liquid handlers is often cumbersome and daunting for the end user. One of the key problems is that to program these devices you need to be physically present in the lab. This is a nuisance: not only may the equipment be occupied by somebody else, forcing you to wait your turn, but getting into the lab at all is not trivial when COVID restrictions limit lab occupancy and push people to work remotely. It is particularly frustrating when you want to prepare an experiment for the next day.

To address these concerns, hardware vendors sometimes provide “Simulation Tools” that you can install on your local machine or your laptop. However, these tools lack functionality. Firstly, it’s a pain to get the correct drivers installed, even in simulation mode - to do so you have to understand how drivers work, which creates yet another dependency. Secondly, it’s very difficult to create an exact copy of the liquid handler configuration in the lab in terms of labware definitions: you may well end up with slightly different definitions on your “not-so-carbon copy” which may set you back when you eventually run the experiment in the lab.

The need to plan on the lab computer can be resolved by more modern and sophisticated software tools that allow you to work with exact in silico replicas of the instruments and that are smart enough to bypass driver commands when needed.

Another related, yet massive problem with liquid handling software today is its dependency on the operating system of the host PC. Windows versions tend to change and before you know it, your IT department will require you to install the latest Windows version, yet the software controlling your lab equipment may not be compatible with that version. This can introduce even more delays to a scientist’s work.

Fortunately, there is an obvious solution for this: cloud computing. Cloud software that runs in the browser is largely independent of the operating system on your own machine. In many areas of science, engineering, and also in our daily lives, cloud computing has taken off dramatically, but why not in scientific labs? While we run Microsoft 365, Google Suite, Dropbox, AWS, JIRA, Miro, and a zillion other apps in our browsers, why should we run lab software as if we were still in the last century?

Third, there is a massive problem with the testability of your liquid handler scripts and methods, which creates a gigantic burden. If you can’t reliably test your scripts offline, you are due for more “dry and wet” runs (runs with colored water on deck, for example).

The software world has made tremendous progress in making it easier for software developers to verify their code: (1) debugging tools let you step through your code, (2) dedicated “Integrated Development Environments”, like PyCharm or Visual Studio, help you spot errors and highlight your syntax, and (3) version control keeps track of all the changes you make.

None of these functionalities exist in liquid handling control software in an accessible way. Without testability, we have a massive dependency: more lab time needs to be scheduled to run your methods, and you will have to invoke your local automation expert at some point in order to verify your methods. Again, we have another two dependencies.

Lastly, because liquid handling software uses low-level commands like “Aspirate”, “Dispense”, “Mix”, and “Transfer Labware”, you have to build everything from the ground up as an end user. There is a big gap between what the end user wants to do and how to achieve that using liquid handling programming. As end users, we think in modular terms like (serial) dilutions, samples, reagents, and reactions - not so much in terms of low-level instructions “Aspirate” and “Dispense”.

In conventional programming, the low-level instructions are often abstracted away into more meaningful commands through library packages. Python’s pandas is a good example here, and so is Excel: instead of requiring users to code in Visual Basic, developers have introduced functions that let you do things like calculate a linear regression relatively straightforwardly.
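To make the idea of abstraction concrete, here is a minimal sketch in Python of how a single high-level "serial dilution" request could expand into the low-level commands mentioned above. The `Step` class and the `serial_dilution` function are hypothetical names invented for this illustration; they do not come from any vendor's software, and the sketch assumes the first well holds the stock and the remaining wells are already filled with diluent.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Step:
    """One low-level liquid handling instruction (hypothetical format)."""
    command: str      # "Aspirate", "Dispense", or "Mix"
    well: str         # well identifier, e.g. "A1"
    volume_ul: float  # volume in microliters


def serial_dilution(wells: List[str], transfer_ul: float, mix_ul: float) -> List[Step]:
    """Expand one high-level serial dilution into low-level steps.

    Assumes wells[0] holds the stock and every other well is
    pre-filled with diluent.
    """
    steps: List[Step] = []
    # Walk along the row: each well is the source for the next one.
    for source, destination in zip(wells, wells[1:]):
        steps.append(Step("Aspirate", source, transfer_ul))
        steps.append(Step("Dispense", destination, transfer_ul))
        steps.append(Step("Mix", destination, mix_ul))
    return steps


# The end user writes one line; the robot would receive nine instructions.
steps = serial_dilution(["A1", "A2", "A3", "A4"], transfer_ul=50.0, mix_ul=80.0)
for step in steps:
    print(f"{step.command:<9} {step.well} {step.volume_ul} uL")
```

The point of the sketch is the ratio: the scientist states the intent once, and the repetitive low-level steps are generated rather than hand-written.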

In science labs, this gap between end-user thinking and low-level thinking results in having to rely on the local automation expert. Many lab users would rather not always be dependent on automation experts. In turn, while being enthusiastic about automation uptake, automation “ninjas” (local experts) usually want to work on more impactful problems than hand-holding end-users, so they also wish end users were more independent.

Even relatively simple tasks such as updating protocols require “ninjas”, which is a dependency that hurts efficiency. Programming liquid handling robots is too difficult today.

There is, however, really good news: modern computing tools can mitigate many of these dependencies:

- Using cloud computing, we will be able to program, design, and verify our experiments from anywhere - and with many people doing so simultaneously, not one at a time like in the physical lab. If we decouple experiment planning from experiment execution, the execution software can be more “basic” and therefore hopefully be less dependent on the operating system of the host PC.

- Independent and robust experimental planning also gives room for a proper preview of all liquid handling actions and thus an in silico verification of your experiment prior to going to the lab.

- Using the browser, a modern look and feel is achieved. Access to modern cloud computing stacks allows us to abstract low-level “Aspirate” / “Dispense” commands into higher-level modules like “Dilute”, “Normalize”, “Aliquot”, or “Combine Liquids”.

- Cloud computing has the additional benefit that versioning and backup are automatically included, as well as user logging and change management. In addition, the modern cloud stack helps support moves toward greater adherence to FAIR data practices (Findable, Accessible, Interoperable, Reusable).
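As an illustration of what in silico verification can mean in practice, the sketch below "dry runs" a list of liquid handling steps entirely in software and reports problems before anyone walks into the lab. It is a simplified example under stated assumptions, not how any particular platform works: the step format (a tuple of command, well, and volume), the `dry_run` function, and the single shared well capacity are all hypothetical.

```python
from typing import Dict, List, Tuple

# Hypothetical step format: (command, well, volume in microliters).
Step = Tuple[str, str, float]


def dry_run(initial_ul: Dict[str, float], capacity_ul: float, steps: List[Step]) -> List[str]:
    """Simulate the steps on a virtual plate and collect any problems."""
    volumes = dict(initial_ul)  # copy, so the caller's plate is untouched
    problems: List[str] = []
    for index, (command, well, volume) in enumerate(steps, start=1):
        current = volumes.get(well, 0.0)
        if command == "Aspirate":
            if volume > current:
                problems.append(
                    f"step {index}: cannot aspirate {volume} uL from {well}, "
                    f"only {current} uL available"
                )
                continue  # skip the impossible step and keep checking
            volumes[well] = current - volume
        elif command == "Dispense":
            if current + volume > capacity_ul:
                problems.append(
                    f"step {index}: dispensing {volume} uL into {well} "
                    f"exceeds the {capacity_ul} uL capacity"
                )
                continue
            volumes[well] = current + volume
        else:
            problems.append(f"step {index}: unknown command {command!r}")
    return problems


plan = [
    ("Aspirate", "A1", 50.0),
    ("Dispense", "A2", 50.0),
    ("Aspirate", "A2", 200.0),  # A2 only holds 150 uL at this point
]
problems = dry_run({"A1": 100.0, "A2": 100.0}, capacity_ul=300.0, steps=plan)
for problem in problems:
    print(problem)
```

A check like this costs nothing to repeat, which is exactly what makes it attractive compared with booking the instrument for another dry or wet run.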

I have been working on one such platform at Synthace for the past three years and think that Synthace has really hit the nail on the head with its cloud platform. Through its “elements” - the building blocks that represent individual experimental steps in the visual interface - users can think at a higher level, while the underlying high-performance computing engine translates these high-level instructions into low-level robot instructions.

The preview feature that Synthace offers is literally game-changing. Unlike a rendered 3D image of the actions where you see a lot of robot actions but hardly any liquid level information, Synthace’s preview allows you to methodically step through your entire experiment and inspect each liquid handling action. You can view which liquid goes where as well as exact transfer parameters (e.g. mixing steps, wet vs free transfers, or the liquid class used). Since Synthace calculates all liquid handling actions, it also tracks full sample provenance and can thus determine how much of each liquid you need.
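To give a feel for why knowing every transfer up front is useful, here is a small, generic Python sketch that adds up how much of each liquid a planned experiment needs. It is not Synthace's implementation: the transfer format (liquid name, destination well, volume), the `required_volumes` function, and the fixed `dead_volume_ul` allowance per liquid are assumptions made for this example.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# Hypothetical transfer format: (liquid name, destination well, volume in uL).
Transfer = Tuple[str, str, float]


def required_volumes(transfers: List[Transfer], dead_volume_ul: float = 0.0) -> Dict[str, float]:
    """Total volume needed per liquid, plus a fixed dead-volume allowance."""
    totals: Dict[str, float] = defaultdict(float)
    for liquid, _destination, volume in transfers:
        totals[liquid] += volume
    # Add the allowance once per liquid, for what cannot be aspirated
    # from the bottom of the source container.
    return {liquid: total + dead_volume_ul for liquid, total in totals.items()}


transfers = [
    ("buffer", "A1", 90.0),
    ("buffer", "A2", 90.0),
    ("sample", "A1", 10.0),
]
needs = required_volumes(transfers, dead_volume_ul=20.0)
for liquid, volume in needs.items():
    print(f"{liquid}: prepare at least {volume} uL")
```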

Synthace extracts all relevant information from your liquid handler so accurate instructions can be calculated. These instructions can be sent to a piece of software that runs on the lab computer. The drivers that Synthace built are quite light touch and have very few dependencies on the operating system. On top of that, Synthace has built strong data alignment functionality for end-to-end usage, so that data from e.g. plate readers, qPCR or HPLC instruments can be automatically associated with the samples that were sent to these devices and with the history of operations these samples went through.
